![2016-12-14-01_22_32]()

Today, we will introduce Apache Mesos, an open source distributed computing system that aims to let applications run on a computer cluster as if they were running on a single computer. On top of a Mesos cluster, we will run Mesosphere Marathon, an open source container orchestration platform. Similar to a watchdog, Marathon helps to run and maintain long-running applications. However, unlike a mere watchdog, Marathon runs the applications in containers, and it provides a modern web portal and a RESTful API.

With the help of Marathon, we will

- run several instances of a simple “Hello World” script on the cluster (within and outside of Docker containers);

- see what happens if an application dies unexpectedly;

- see what happens if an application's resource reservation request exceeds the available resources.

For simplicity and quick installation purposes, all components of the Mesos architecture will be run within Docker containers.

What is Mesos?

Mesos is an open source project that turns a set of machines into a single distributed computing system. It provides applications (e.g. Hadoop, Spark, Kafka, Elasticsearch) with APIs for resource management and scheduling across entire datacenters and cloud environments.

![2016-12-14-02_28_36-mesos-marathon-architecture-google-slides]()

The Mesos agents advertise their available resources (CPU, RAM, …) to the master, which relays those offers to frameworks such as Marathon, Hadoop, Jenkins and many more. A framework may accept all or part of the offered resources and run its application on the Mesos agents (slaves).
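To make the offer cycle more concrete, here is a toy simulation in Python. It is not Mesos code: the `Offer` and `Task` classes and the `accept_offer` function are hypothetical names invented for this sketch, and greedy first-fit is only one simple way a framework might react to an offer. The offer figures (2 CPUs, 2928 MB) match what the single agent in this post reports in its startup log.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    """Resources an agent advertises via the master (illustrative only)."""
    agent: str
    cpus: float
    mem_mb: float


@dataclass
class Task:
    """What a framework wants to run (illustrative only)."""
    name: str
    cpus: float
    mem_mb: float


def accept_offer(offer, pending):
    """Greedy first-fit: launch every pending task that still fits.

    Returns (launched, still_pending). Whatever is left of the offer
    would be declined and handed back to the master.
    """
    free_cpus, free_mem = offer.cpus, offer.mem_mb
    launched, still_pending = [], []
    for task in pending:
        if task.cpus <= free_cpus and task.mem_mb <= free_mem:
            free_cpus -= task.cpus
            free_mem -= task.mem_mb
            launched.append(task)
        else:
            still_pending.append(task)
    return launched, still_pending


offer = Offer(agent="agent-1", cpus=2.0, mem_mb=2928)
tasks = [
    Task("hello-1", cpus=0.5, mem_mb=128),
    Task("hello-2", cpus=0.5, mem_mb=128),
    Task("too-big", cpus=4.0, mem_mb=512),  # exceeds what the agent offers
]
launched, waiting = accept_offer(offer, tasks)
print([t.name for t in launched])  # tasks that fit into the offer
print([t.name for t in waiting])   # tasks that keep waiting for a larger offer
```

The task that asks for more CPUs than the agent has simply stays pending, which is the behavior we will provoke on purpose when a reservation request exceeds the available resources.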

What is Marathon?

Mesosphere, the owner of Marathon, calls Marathon “a production-grade container orchestration platform for Mesosphere’s Datacenter Operating System (DC/OS) and Apache Mesos.”

Among other things, it offers:

- active-standby redundancy for increased availability

- container support: Marathon starts containers on the Mesos agents; both Mesos containers (using cgroups) and Docker containers are supported

- a powerful GUI

- a REST API for easier integration.
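As a taste of the REST API, the sketch below builds (but does not send) an HTTP request that asks Marathon to start two instances of a shell-based "Hello World" app. `POST /v2/apps` is Marathon's endpoint for creating apps; the app id `/hello-world`, the command and the resource figures are made-up illustration values, and the base URL assumes Marathon listens on port 7070 of the local host, which is the port chosen in this post.

```python
import json
import urllib.request


def build_create_app_request(base_url, app_id, cmd, cpus, mem_mb, instances):
    """Build a POST /v2/apps request for a command-based Marathon app."""
    app = {
        "id": app_id,
        "cmd": cmd,
        "cpus": cpus,
        "mem": mem_mb,  # Marathon expects memory in MB
        "instances": instances,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v2/apps",
        data=json.dumps(app).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Assumed values: Marathon on 127.0.0.1:7070 and a hypothetical app id.
request = build_create_app_request(
    "http://127.0.0.1:7070",
    app_id="/hello-world",
    cmd="while true; do echo 'Hello World'; sleep 5; done",
    cpus=0.1,
    mem_mb=32,
    instances=2,
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))

# To actually submit it against a running Marathon:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

Building the request separately from sending it keeps the example runnable without a cluster and makes the JSON payload easy to inspect.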

A more complete feature list can be found here.

Compared to other schedulers like Apache Aurora, which is used by Twitter, Marathon seems to be much easier to handle. On the other hand, Aurora offers elaborate prioritization and preemption features. Those may be important if the same resources are shared between production and development: if a production workload does not find any resources on a Mesos slave, Aurora will kill off less important applications in order to free up resources.
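To illustrate what preemption means here, the following toy function picks the lowest-priority tasks to kill until a CPU shortfall is covered. This is not Aurora's actual algorithm; the task names, the priorities and the `pick_victims` helper are invented for this sketch.

```python
def pick_victims(running, needed_cpus, new_priority):
    """Toy preemption: choose lowest-priority tasks until enough CPU is freed.

    `running` is a list of (name, priority, cpus) tuples and `needed_cpus`
    is the CPU shortfall of the new workload. Only tasks with a priority
    below `new_priority` may be preempted. Returns the names to kill, or
    None if even killing all candidates would not free enough CPU.
    """
    candidates = sorted(
        (task for task in running if task[1] < new_priority),
        key=lambda task: task[1],
    )
    victims, freed = [], 0.0
    for name, _priority, cpus in candidates:
        if freed >= needed_cpus:
            break
        victims.append(name)
        freed += cpus
    return victims if freed >= needed_cpus else None


running = [
    ("dev-build", 1, 1.0),
    ("staging-api", 5, 1.0),
    ("prod-db", 10, 2.0),
]
# A production workload (priority 10) is short of 1.5 CPUs:
print(pick_victims(running, needed_cpus=1.5, new_priority=10))
# A shortfall of 3 CPUs cannot be covered without touching production:
print(pick_victims(running, needed_cpus=3.0, new_priority=10))
```

Marathon, as used in this post, has no such mechanism: an app that does not fit simply waits.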

A good comparison between Mesosphere Marathon and Apache Aurora can be found on this Hootsuite Development web page.

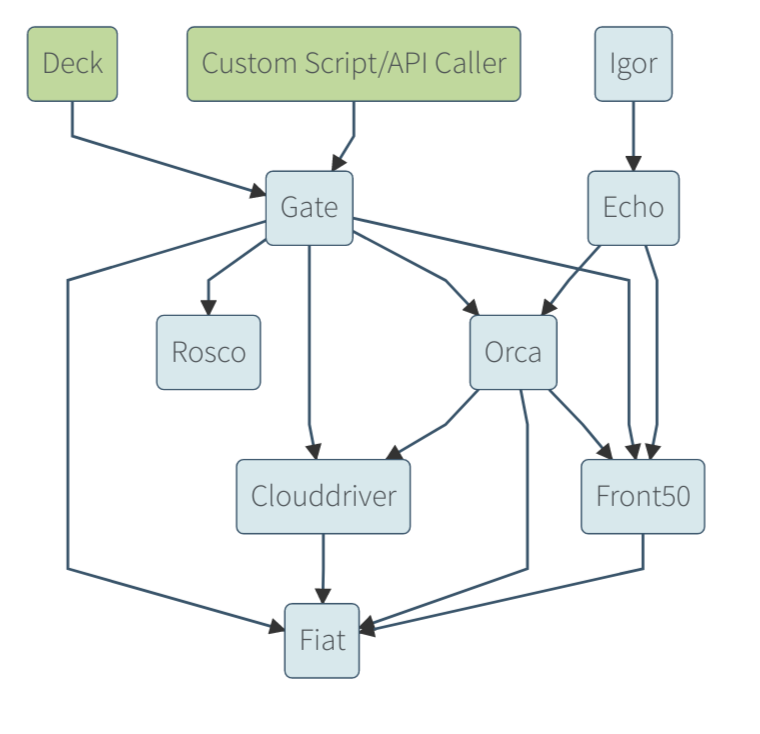

Target Configuration for this Blog Post

In this “Hello World” example, we will create a simple Mesos configuration with a Marathon framework, a single Zookeeper, a single master and a single agent (slave):

![2016-12-14-02_14_43-mesos-marathon-architecture-google-slides]()

We will also show what happens if we kill a Marathon app.

Versions & Tools used

Prerequisites:

- at least ~4 GB RAM: after starting a Mesos master, a Mesos agent (slave), a ZooKeeper and a Marathon Docker container, I observed a RAM usage of ~3.8 GB
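If you want to check this prerequisite on a Linux host, a small sketch like the following can help. It parses the `MemAvailable` field of `/proc/meminfo` (present on Linux kernels 3.14 and later). The sample text and the helper names are made up for illustration; the 4096 MB threshold is just the ~4 GB figure from above.

```python
def mem_available_mb(meminfo_text):
    """Return the MemAvailable value from /proc/meminfo content, in MB."""
    for line in meminfo_text.splitlines():
        if line.startswith("MemAvailable:"):
            kib = int(line.split()[1])  # the kernel reports the value in kB
            return kib // 1024
    raise ValueError("MemAvailable not found (kernel older than 3.14?)")


def enough_memory(meminfo_text, required_mb=4096):
    """True if at least `required_mb` MB are available."""
    return mem_available_mb(meminfo_text) >= required_mb


# Made-up sample; on a real Linux host use open("/proc/meminfo").read()
sample = """MemTotal:        8167848 kB
MemFree:         1409696 kB
MemAvailable:    5283812 kB
"""
print(mem_available_mb(sample), "MB available")
print("enough for this tutorial:", enough_memory(sample))
```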

Step 1: Install a Docker Host via Vagrant and Connect to the Host via SSH

If you are using an existing Docker host, make sure that your host has enough free memory.

We will run the applications in Docker containers in order to allow for maximum interoperability. This way, we can always use the latest versions without the need to control the Java version used.

If you are new to Docker, you might want to read this blog post.

Installing Docker on Windows and Mac can be a real challenge, but no worries: we will show an easy way here that is much quicker than the one described in Docker’s official documentation:

Prerequisites of this step:

- I recommend having direct access to the Internet: via a firewall, but without an HTTP proxy. However, if you cannot get rid of your HTTP proxy, read this blog post.

- Administration rights on your computer.

Steps to install a Docker Host VirtualBox VM:

1. Download and install VirtualBox (if the installation fails with error message “<to be completed>”, see Appendix A of this blog post: VirtualBox Installation Workaround)

2. Download and install Vagrant (requires a reboot)

3. Download the Vagrant box containing an Ubuntu-based Docker host and create a VirtualBox VM as follows:

basesystem# mkdir ubuntu-trusty64-docker ; cd ubuntu-trusty64-docker

basesystem# vagrant init williamyeh/ubuntu-trusty64-docker

basesystem# vagrant up

basesystem# vagrant ssh

Now you are logged into the Docker host and we are ready for the next step: to create the Docker images.

Note: I have experienced problems with the vi editor when running vagrant ssh in a Windows terminal. On Windows, consider following Appendix C of this blog post and using PuTTY instead.

Step 2 (recommended): Download Docker Images

This step is optional, since each docker run command downloads its image automatically if the image is not yet available on the Docker host. However, I recommend downloading the images in advance, so that when you run the applications, you can observe the logs and other feedback (e.g. syntax errors) immediately.
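If you prefer to fetch everything in one go, the pulls of the following sub-steps can be scripted. The sketch below only prints the commands, so it is safe to run anywhere; the image references are the ones used in this post, while the `pull_commands` helper is just an illustrative name.

```python
import shlex

# Image references exactly as pulled in Steps 2.1 to 2.4 of this post.
IMAGES = [
    "netflixoss/exhibitor:1.5.2",
    "mesosphere/mesos-master:1.1.01.1.0-2.0.107.ubuntu1404",
    "mesosphere/mesos-slave:1.1.01.1.0-2.0.107.ubuntu1404",
    "mesosphere/marathon",  # no tag given, so Docker uses "latest"
]


def pull_commands(images, use_sudo=True):
    """Return one `docker pull` argument list per image."""
    prefix = ["sudo"] if use_sudo else []
    return [prefix + ["docker", "pull", image] for image in images]


for argv in pull_commands(IMAGES):
    # Print instead of executing, so the script has no side effects.
    print(" ".join(shlex.quote(arg) for arg in argv))

# To execute for real on the Docker host:
# import subprocess
# for argv in pull_commands(IMAGES):
#     subprocess.run(argv, check=True)
```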

Step 2.1 Download Zookeeper

Judging from the Mesosphere GitHub documentation, it seems that a ZooKeeper (Exhibitor) is always needed. The only tag available as of today is 1.5.2. Let us download it first:

(dockerhost)$ sudo docker pull netflixoss/exhibitor:1.5.2

1.5.2: Pulling from netflixoss/exhibitor

a3ed95caeb02: Pull complete

831a6feb5ab2: Pull complete

b32559aac4de: Pull complete

5e99535a7b44: Pull complete

aa076096cff1: Pull complete

423664404a49: Pull complete

929c1efe4d14: Pull complete

387bf8857f2e: Pull complete

5efe9ea3de0d: Pull complete

a53f74fd9d17: Pull complete

78b42a885be7: Pull complete

684d8691844e: Pull complete

Digest: sha256:9b384a431d2e231f0bd3fcda5eff20d5eabd5ba1e3361764a4834d3401fbc4d4

Status: Downloaded newer image for netflixoss/exhibitor:1.5.2

Step 2.2 Download Mesos Master

It is quite confusing how many distributions of Mesos exist. On Docker Hub, the distribution from Mesoscloud seems to have the most stars:

$ sudo docker search mesos

NAME DESCRIPTION STARS OFFICIAL AUTOMATED

mesoscloud/mesos-master Mesos Master 50 [OK]

mesoscloud/mesos-slave Mesos Slave 31 [OK]

...

However, if I search for mesos-master, I find another image with even more stars and downloads:

$ sudo docker search mesos-master

NAME DESCRIPTION STARS OFFICIAL AUTOMATED

mesosphere/mesos-master Mesos-Master in Docker 71

mesoscloud/mesos-master Mesos Master 50 [OK]

...

Mesosphere also offers a two-in-one solution with master and slave combined in a single container: mesosphere/mesos. However, the recommended way of running Mesos is to run a master on one Docker host and slaves on other Docker hosts.

Let us download the images mesosphere/mesos-master and mesosphere/mesos-slave. Note that the “latest” tag does not exist, so we need to specify the tag explicitly. Let us try the latest version I have found to exist for both master and slave, i.e. 1.1.01.1.0-2.0.107.ubuntu1404.

(dockerhost)$ docker pull mesosphere/mesos-master:1.1.01.1.0-2.0.107.ubuntu1404

1.1.01.1.0-2.0.107.ubuntu1404: Pulling from mesosphere/mesos-master

bf5d46315322: Already exists

9f13e0ac480c: Already exists

e8988b5b3097: Already exists

40af181810e7: Already exists

e6f7c7e5c03e: Already exists

a3ed95caeb02: Already exists

01a862c74d96: Already exists

651b06ceb77e: Already exists

Digest: sha256:a011e002d641c6ba8361c542bd9429af721b7c7434598a9615cbd5b05511af7f

Status: Downloaded newer image for mesosphere/mesos-master:1.1.01.1.0-2.0.107.ubuntu1404

The version of the downloaded Mesos master image can be checked with the following command:

(dockerhost)$ sudo docker run -it --rm --name mesos-master mesosphere/mesos-master:1.1.01.1.0-2.0.107.ubuntu1404 --version

mesos 1.1.0

We are currently using version 1.1.0.

Step 2.3 Download Mesos Slave

(dockerhost)$ docker pull mesosphere/mesos-slave:1.1.01.1.0-2.0.107.ubuntu1404

1.1.01.1.0-2.0.107.ubuntu1404: Pulling from mesosphere/mesos-slave

bf5d46315322: Already exists

9f13e0ac480c: Already exists

e8988b5b3097: Already exists

40af181810e7: Already exists

e6f7c7e5c03e: Already exists

a3ed95caeb02: Already exists

01a862c74d96: Already exists

651b06ceb77e: Already exists

Digest: sha256:bb75cc78c6880a2faa5307e3d8caa806105c673e9002429e60e3ae858d162999

Status: Downloaded newer image for mesosphere/mesos-slave:1.1.01.1.0-2.0.107.ubuntu1404

Step 2.4 Download Marathon

While Mesos will offer computing resources, Marathon is a framework that will ask for those resources.

(dockerhost)$ sudo docker pull mesosphere/marathon

Using default tag: latest

latest: Pulling from mesosphere/marathon

43c265008fae: Pull complete

af36d2c7a148: Pull complete

143e9d501644: Pull complete

bfc4cdbc8d81: Pull complete

38c6fc3e9968: Pull complete

0bfa8d5153bb: Pull complete

05bc8d0fffca: Pull complete

f1266a2a7ecb: Pull complete

f505e7ed4b7e: Pull complete

219f8c7fc022: Pull complete

Digest: sha256:9c881ff6f46a0da69f622a19a1677f1424a12ef37d076ec439854f15b97179fa

Status: Downloaded newer image for mesosphere/marathon:latest

Marathon does not offer a --version option or the like. The Marathon version can only be seen in the log when running Marathon:

(dockerhost)$ sudo docker run -it --net=host -v `pwd`:/work_dir --entrypoint=bash mesosphere/marathon

root@openshift-installer:/marathon# ./bin/start --master local --zk zk://localhost:2181/marathon --http_port=7070

MESOS_NATIVE_JAVA_LIBRARY is not set. Searching in /usr/lib /usr/local/lib.

MESOS_NATIVE_LIBRARY, MESOS_NATIVE_JAVA_LIBRARY set to '/usr/lib/libmesos.so'

No start hook file found ($HOOK_MARATHON_START). Proceeding with the start script.

[2016-12-12 21:01:46,268] INFO Starting Marathon 1.3.6/unknown with --master local --zk zk://localhost:2181/marathon --http_port=7070 (mesosphere.marathon.Main$:main)

Step 3: Run Mesos

In this step, we will run the Mesos master interactively (with the -it switch instead of the -d switch) to better see what is happening. In a production environment, you would use the detached mode -d instead of the interactive terminal mode -it.

By analyzing the Dockerfiles of the mesos-master and mesos-slave images, we have found that both are based on mesosphere/mesos, with different entrypoints and commands:

- mesosphere/mesos-master: entrypoint mesos-master with default option --registry=in_memory

- mesosphere/mesos-slave: entrypoint mesos-slave with no default options

Interestingly, the Dockerfiles do not expose any ports. The reason is that the Docker images are supposed to run in host network mode, in which they share the network interfaces, including IP address(es) and ports, with the Docker host.

The usage of the Docker images is documented on GitHub. It seems that a ZooKeeper is needed:

Step 3.1: Run Zookeeper (Exhibitor) interactively in a Container

In order to better see what is happening, we will run the ZooKeeper in interactive terminal mode (-it) instead of the detached mode (-d) described in the documentation. With the --net=host option, we share the network with the Docker host, so we do not need to explicitly expose the TCP ports used:

(dockerhost)$ sudo docker run -it --net=host --name=zookeeper netflixoss/exhibitor:1.5.2

v1.0

INFO com.netflix.exhibitor.core.activity.ActivityLog Exhibitor started [main]

Dec 12, 2016 5:05:28 PM java.util.prefs.FileSystemPreferences$1 run

INFO: Created user preferences directory.

INFO org.mortbay.log Logging to org.slf4j.impl.Log4jLoggerAdapter(org.mortbay.log) via org.mortbay.log.Slf4jLog [main]

INFO org.mortbay.log jetty-1.0 [main]

Dec 12, 2016 5:05:29 PM com.sun.jersey.server.impl.application.WebApplicationImpl _initiate

INFO: Initiating Jersey application, version 'Jersey: 1.9.1 09/14/2011 02:36 PM'

INFO org.mortbay.log Started SocketConnector@0.0.0.0:8080 [main]

INFO com.netflix.exhibitor.core.activity.ActivityLog State: down [ActivityQueue-0]

Dec 12, 2016 5:05:30 PM java.util.prefs.FileSystemPreferences$6 run

WARNING: Prefs file removed in background /root/.java/.userPrefs/prefs.xml

INFO com.netflix.exhibitor.core.activity.ActivityLog Attempting to stop instance [ActivityQueue-0]

INFO com.netflix.exhibitor.core.activity.ActivityLog Attempting to start/restart ZooKeeper [ActivityQueue-0]

INFO com.netflix.exhibitor.core.activity.ActivityLog jps didn't find instance - assuming ZK is not running [ActivityQueue-0]

INFO com.netflix.exhibitor.core.activity.ActivityLog Starting in standalone mode [ActivityQueue-0]

ERROR com.netflix.exhibitor.core.activity.ActivityLog ZooKeeper Server: JMX enabled by default [pool-2-thread-1]

INFO com.netflix.exhibitor.core.activity.ActivityLog Process started via: /zookeeper/bin/zkServer.sh [ActivityQueue-0]

ERROR com.netflix.exhibitor.core.activity.ActivityLog ZooKeeper Server: Using config: /zookeeper/bin/../conf/zoo.cfg [pool-2-thread-1]

INFO com.netflix.exhibitor.core.activity.ActivityLog ZooKeeper Server: Starting zookeeper ... STARTED [pool-2-thread-2]

INFO com.netflix.exhibitor.core.activity.ActivityLog State: serving [ActivityQueue-0]

In the log, we see that the ZooKeeper container is listening on port 8080 (the SocketConnector@0.0.0.0:8080 line, which belongs to the Exhibitor web server). Therefore, as we will see in Step 5, the TCP port for Marathon needs to be changed in order to avoid a port collision.
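Because all containers share the host's network stack, such collisions are easy to run into. A quick way to see which ports are already taken is to try a TCP connection to each of them. The sketch below does that with Python's standard `socket` module; the `port_in_use` helper is an illustrative name, and the port list is simply the set of ports mentioned in this post.

```python
import socket


def port_in_use(port, host="127.0.0.1", timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        return sock.connect_ex((host, port)) == 0


# Ports mentioned in this post: 2181 (ZooKeeper), 5050 (Mesos master),
# 5051 (Mesos agent), 8080 (taken by the ZooKeeper/Exhibitor container),
# 7070 (planned for Marathon).
for port in (2181, 5050, 5051, 8080, 7070):
    state = "in use" if port_in_use(port) else "free"
    print(port, state)
```

Run on the Docker host after starting the ZooKeeper container, this should report 8080 as in use, which is why Marathon gets a different port.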

Step 3.2: Run Master interactively in a Container

Here again, we are following the documentation of the images, but we are starting the Mesos master in interactive mode in a different terminal in order to see all logs, and we use the latest version 1.1.0 instead of 0.28.0:

(dockerhost)$ sudo docker run -it --net=host \

--name mesos-master \

-e MESOS_PORT=5050 \

-e MESOS_ZK=zk://127.0.0.1:2181/mesos \

-e MESOS_QUORUM=1 \

-e MESOS_REGISTRY=in_memory \

-e MESOS_LOG_DIR=/var/log/mesos \

-e MESOS_WORK_DIR=/var/tmp/mesos \

-v "$(pwd)/log/mesos:/var/log/mesos" \

-v "$(pwd)/tmp/mesos:/var/tmp/mesos" \

mesosphere/mesos-master:1.1.01.1.0-2.0.107.ubuntu1404

WARNING: Logging before InitGoogleLogging() is written to STDERR

I1212 17:10:44.898630 1 main.cpp:263] Build: 2016-11-16 01:30:23 by ubuntu

I1212 17:10:44.898900 1 main.cpp:264] Version: 1.1.0

I1212 17:10:44.898916 1 main.cpp:267] Git tag: 1.1.0

I1212 17:10:44.898927 1 main.cpp:271] Git SHA: a44b077ea0df54b77f05550979e1e97f39b15873

I1212 17:10:44.903816 1 logging.cpp:194] INFO level logging started!

I1212 17:10:44.904436 1 main.cpp:370] Using 'HierarchicalDRF' allocator

2016-12-12 17:10:44,905:1(0x7ff4a8164700):ZOO_INFO@log_env@726: Client environment:zookeeper.version=zookeeper C client 3.4.8

2016-12-12 17:10:44,905:1(0x7ff4a8164700):ZOO_INFO@log_env@730: Client environment:host.name=openshift-installer

2016-12-12 17:10:44,905:1(0x7ff4a8164700):ZOO_INFO@log_env@737: Client environment:os.name=Linux

2016-12-12 17:10:44,905:1(0x7ff4a8164700):ZOO_INFO@log_env@738: Client environment:os.arch=4.2.0-42-generic

2016-12-12 17:10:44,905:1(0x7ff4a8164700):ZOO_INFO@log_env@739: Client environment:os.version=#49~14.04.1-Ubuntu SMP Wed Jun 29 20:22:11 UTC 2016

2016-12-12 17:10:44,907:1(0x7ff4a6961700):ZOO_INFO@log_env@726: Client environment:zookeeper.version=zookeeper C client 3.4.8

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@log_env@730: Client environment:host.name=openshift-installer

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@log_env@737: Client environment:os.name=Linux

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@log_env@738: Client environment:os.arch=4.2.0-42-generic

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@log_env@739: Client environment:os.version=#49~14.04.1-Ubuntu SMP Wed Jun 29 20:22:11 UTC 2016

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@log_env@747: Client environment:user.name=(null)

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@log_env@755: Client environment:user.home=/root

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@log_env@767: Client environment:user.dir=/

2016-12-12 17:10:44,910:1(0x7ff4a6961700):ZOO_INFO@zookeeper_init@800: Initiating client connection, host=127.0.0.1:2181 sessionTimeout=10000 watcher=0x7ff4b16c6200 sessionId=0 sessionPasswd=<null> context=0x7ff490000930 flags=0

I1212 17:10:44.909833 11 master.cpp:380] Master 917a95ab-7b77-4316-8e52-1431a8043af3 (openshift-installer-native-docker-compose) started on 127.0.0.1:5050

I1212 17:10:44.912077 11 master.cpp:382] Flags at startup: --agent_ping_timeout="15secs" --agent_reregister_timeout="10mins" --allocation_interval="1secs" --allocator="HierarchicalDRF" --authenticate_agents="false" --authenticate_frameworks="false" --authenticate_http_frameworks="false" --authenticate_http_readonly="false" --authenticate_http_readwrite="false" --authenticators="crammd5" --authorizers="local" --framework_sorter="drf" --help="false" --hostname_lookup="true" --http_authenticators="basic" --initialize_driver_logging="true" --log_auto_initialize="true" --log_dir="/var/log/mesos" --logbufsecs="0" --logging_level="INFO" --max_agent_ping_timeouts="5" --max_completed_frameworks="50" --max_completed_tasks_per_framework="1000" --port="5050" --quiet="false" --quorum="1" --recovery_agent_removal_limit="100%" --registry="in_memory" --registry_fetch_timeout="1mins" --registry_gc_interval="15mins" --registry_max_agent_age="2weeks" --registry_max_agent_count="102400" --registry_store_timeout="20secs" --registry_strict="false" --root_submissions="true" --user_sorter="drf" --version="false" --webui_dir="/usr/share/mesos/webui" --work_dir="/var/tmp/mesos" --zk="zk://127.0.0.1:2181/mesos" --zk_session_timeout="10secs"

2016-12-12 17:10:44,909:1(0x7ff4a8164700):ZOO_INFO@log_env@747: Client environment:user.name=(null)

2016-12-12 17:10:44,913:1(0x7ff4a8164700):ZOO_INFO@log_env@755: Client environment:user.home=/root

2016-12-12 17:10:44,913:1(0x7ff4a8164700):ZOO_INFO@log_env@767: Client environment:user.dir=/

2016-12-12 17:10:44,913:1(0x7ff4a8164700):ZOO_INFO@zookeeper_init@800: Initiating client connection, host=127.0.0.1:2181 sessionTimeout=10000 watcher=0x7ff4b16c6200 sessionId=0 sessionPasswd=<null> context=0x7ff4980038a0 flags=0

W1212 17:10:44.913079 11 master.cpp:385]

**************************************************

Master bound to loopback interface! Cannot communicate with remote schedulers or agents. You might want to set '--ip' flag to a routable IP address.

**************************************************

I1212 17:10:44.914429 11 master.cpp:434] Master allowing unauthenticated frameworks to register

I1212 17:10:44.914448 11 master.cpp:448] Master allowing unauthenticated agents to register

I1212 17:10:44.914455 11 master.cpp:462] Master allowing HTTP frameworks to register without authentication

I1212 17:10:44.914474 11 master.cpp:504] Using default 'crammd5' authenticator

W1212 17:10:44.914487 11 authenticator.cpp:512] No credentials provided, authentication requests will be refused

I1212 17:10:44.914687 11 authenticator.cpp:519] Initializing server SASL

2016-12-12 17:10:44,922:1(0x7ff497fff700):ZOO_INFO@check_events@1728: initiated connection to server [127.0.0.1:2181]

2016-12-12 17:10:44,923:1(0x7ff497fff700):ZOO_INFO@check_events@1775: session establishment complete on server [127.0.0.1:2181], sessionId=0x158f400557d0003, negotiated timeout=10000

2016-12-12 17:10:44,923:1(0x7ff4977fe700):ZOO_INFO@check_events@1728: initiated connection to server [127.0.0.1:2181]

2016-12-12 17:10:44,924:1(0x7ff4977fe700):ZOO_INFO@check_events@1775: session establishment complete on server [127.0.0.1:2181], sessionId=0x158f400557d0002, negotiated timeout=10000

I1212 17:10:44.924991 8 group.cpp:340] Group process (zookeeper-group(2)@127.0.0.1:5050) connected to ZooKeeper

I1212 17:10:44.925303 8 group.cpp:828] Syncing group operations: queue size (joins, cancels, datas) = (0, 0, 0)

I1212 17:10:44.925424 8 group.cpp:418] Trying to create path '/mesos' in ZooKeeper

I1212 17:10:44.925204 5 group.cpp:340] Group process (zookeeper-group(1)@127.0.0.1:5050) connected to ZooKeeper

I1212 17:10:44.925606 5 group.cpp:828] Syncing group operations: queue size (joins, cancels, datas) = (0, 0, 0)

I1212 17:10:44.925617 5 group.cpp:418] Trying to create path '/mesos' in ZooKeeper

I1212 17:10:44.931725 8 contender.cpp:152] Joining the ZK group

I1212 17:10:44.932301 11 master.cpp:1951] Successfully attached file '/var/log/mesos/mesos-master.INFO'

I1212 17:10:44.936324 9 contender.cpp:268] New candidate (id='1') has entered the contest for leadership

I1212 17:10:44.937194 5 detector.cpp:152] Detected a new leader: (id='1')

I1212 17:10:44.937408 5 group.cpp:697] Trying to get '/mesos/json.info_0000000001' in ZooKeeper

I1212 17:10:44.939241 5 zookeeper.cpp:259] A new leading master (UPID=master@127.0.0.1:5050) is detected

I1212 17:10:44.939414 5 master.cpp:2017] Elected as the leading master!

I1212 17:10:44.939437 5 master.cpp:1560] Recovering from registrar

I1212 17:10:44.941402 5 registrar.cpp:362] Successfully fetched the registry (0B) in 1.7152ms

I1212 17:10:44.941573 5 registrar.cpp:461] Applied 1 operations in 5462ns; attempting to update the registry

I1212 17:10:44.946907 5 registrar.cpp:506] Successfully updated the registry in 5.135104ms

I1212 17:10:44.947170 5 registrar.cpp:392] Successfully recovered registrar

I1212 17:10:44.947314 5 master.cpp:1676] Recovered 0 agents from the registry (184B); allowing 10mins for agents to re-register

2016-12-12 17:11:11,640:1(0x7ff497fff700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 14ms

2016-12-12 17:11:11,641:1(0x7ff4977fe700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 15ms

2016-12-12 17:11:35,045:1(0x7ff497fff700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 50ms

2016-12-12 17:11:35,046:1(0x7ff4977fe700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 51ms

Step 3.3: Run Slave interactively in a Container

Here again, we are following the documentation of the images, but we are starting the Mesos slave in interactive mode (-it) in a third terminal in order to see all logs, and we use the latest version 1.1.0 instead of 0.28.0:

(dockerhost)$ sudo docker run -it --net=host --privileged \

-e MESOS_PORT=5051 \

-e MESOS_MASTER=zk://127.0.0.1:2181/mesos \

-e MESOS_SWITCH_USER=0 \

-e MESOS_CONTAINERIZERS=docker,mesos \

-e MESOS_LOG_DIR=/var/log/mesos \

-e MESOS_WORK_DIR=/var/tmp/mesos \

-v "$(pwd)/log/mesos:/var/log/mesos" \

-v "$(pwd)/tmp/mesos:/var/tmp/mesos" \

-v /var/run/docker.sock:/var/run/docker.sock \

-v /cgroup:/cgroup \

-v /sys:/sys \

-v /usr/local/bin/docker:/usr/local/bin/docker \

mesosphere/mesos-slave:1.1.01.1.0-2.0.107.ubuntu1404

WARNING: Logging before InitGoogleLogging() is written to STDERR

I1212 17:18:39.260704 1 main.cpp:243] Build: 2016-11-16 01:30:23 by ubuntu

I1212 17:18:39.261031 1 main.cpp:244] Version: 1.1.0

I1212 17:18:39.261075 1 main.cpp:247] Git tag: 1.1.0

I1212 17:18:39.261108 1 main.cpp:251] Git SHA: a44b077ea0df54b77f05550979e1e97f39b15873

I1212 17:18:39.265000 1 logging.cpp:194] INFO level logging started!

I1212 17:18:39.400902 1 containerizer.cpp:200] Using isolation: posix/cpu,posix/mem,filesystem/posix,network/cni

I1212 17:18:39.429229 1 linux_launcher.cpp:150] Using /sys/fs/cgroup/freezer as the freezer hierarchy for the Linux launcher

2016-12-12 17:18:39,438:1(0x7ff8f7601700):ZOO_INFO@log_env@726: Client environment:zookeeper.version=zookeeper C client 3.4.8

2016-12-12 17:18:39,439:1(0x7ff8f7601700):ZOO_INFO@log_env@730: Client environment:host.name=openshift-installer

2016-12-12 17:18:39,439:1(0x7ff8f7601700):ZOO_INFO@log_env@737: Client environment:os.name=Linux

2016-12-12 17:18:39,439:1(0x7ff8f7601700):ZOO_INFO@log_env@738: Client environment:os.arch=4.2.0-42-generic

2016-12-12 17:18:39,439:1(0x7ff8f7601700):ZOO_INFO@log_env@739: Client environment:os.version=#49~14.04.1-Ubuntu SMP Wed Jun 29 20:22:11 UTC 2016

I1212 17:18:39.438886 1 slave.cpp:208] Mesos agent started on (1)@127.0.0.1:5051

2016-12-12 17:18:39,439:1(0x7ff8f7601700):ZOO_INFO@log_env@747: Client environment:user.name=(null)

I1212 17:18:39.439324 1 slave.cpp:209] Flags at startup: --appc_simple_discovery_uri_prefix="http://" --appc_store_dir="/tmp/mesos/store/appc" --authenticate_http_readonly="false" --authenticate_http_readwrite="false" --authenticatee="crammd5" --authentication_backoff_factor="1secs" --authorizer="local" --cgroups_cpu_enable_pids_and_tids_count="false" --cgroups_enable_cfs="false" --cgroups_hierarchy="/sys/fs/cgroup" --cgroups_limit_swap="false" --cgroups_root="mesos" --container_disk_watch_interval="15secs" --containerizers="docker,mesos" --default_role="*" --disk_watch_interval="1mins" --docker="docker" --docker_kill_orphans="true" --docker_registry="https://registry-1.docker.io" --docker_remove_delay="6hrs" --docker_socket="/var/run/docker.sock" --docker_stop_timeout="0ns" --docker_store_dir="/tmp/mesos/store/docker" --docker_volume_checkpoint_dir="/var/run/mesos/isolators/docker/volume" --enforce_container_disk_quota="false" --executor_registration_timeout="1mins" --executor_shutdown_grace_period="5secs" --fetcher_cache_dir="/tmp/mesos/fetch" --fetcher_cache_size="2GB" --frameworks_home="" --gc_delay="1weeks" --gc_disk_headroom="0.1" --hadoop_home="" --help="false" --hostname_lookup="true" --http_authenticators="basic" --http_command_executor="false" --image_provisioner_backend="copy" --initialize_driver_logging="true" --isolation="posix/cpu,posix/mem" --launcher="linux" --launcher_dir="/usr/libexec/mesos" --log_dir="/var/log/mesos" --logbufsecs="0" --logging_level="INFO" --master="zk://127.0.0.1:2181/mesos" --max_completed_executors_per_framework="150" --oversubscribed_resources_interval="15secs" --perf_duration="10secs" --perf_interval="1mins" --port="5051" --qos_correction_interval_min="0ns" --quiet="false" --recover="reconnect" --recovery_timeout="15mins" --registration_backoff_factor="1secs" --revocable_cpu_low_priority="true" --runtime_dir="/var/run/mesos" --sandbox_directory="/mnt/mesos/sandbox" --strict="true" --switch_user="false" 
--systemd_enable_support="true" --systemd_runtime_directory="/run/systemd/system" --version="false" --work_dir="/var/tmp/mesos"

2016-12-12 17:18:39,441:1(0x7ff8f7601700):ZOO_INFO@log_env@755: Client environment:user.home=/root

2016-12-12 17:18:39,442:1(0x7ff8f7601700):ZOO_INFO@log_env@767: Client environment:user.dir=/

2016-12-12 17:18:39,442:1(0x7ff8f7601700):ZOO_INFO@zookeeper_init@800: Initiating client connection, host=127.0.0.1:2181 sessionTimeout=10000 watcher=0x7ff9023a0200 sessionId=0 sessionPasswd=<null> context=0x7ff8d4001e00 flags=0

2016-12-12 17:18:39,445:1(0x7ff8f4dfc700):ZOO_INFO@check_events@1728: initiated connection to server [127.0.0.1:2181]

2016-12-12 17:18:39,446:1(0x7ff8f4dfc700):ZOO_INFO@check_events@1775: session establishment complete on server [127.0.0.1:2181], sessionId=0x158f400557d0004, negotiated timeout=10000

W1212 17:18:39.440688 1 slave.cpp:212]

**************************************************

Agent bound to loopback interface! Cannot communicate with remote master(s). You might want to set '--ip' flag to a routable IP address.

**************************************************

I1212 17:18:39.448009 8 group.cpp:340] Group process (zookeeper-group(1)@127.0.0.1:5051) connected to ZooKeeper

I1212 17:18:39.448274 8 group.cpp:828] Syncing group operations: queue size (joins, cancels, datas) = (0, 0, 0)

I1212 17:18:39.448418 8 group.cpp:418] Trying to create path '/mesos' in ZooKeeper

I1212 17:18:39.450460 1 slave.cpp:533] Agent resources: cpus(*):2; mem(*):2928; disk(*):1902607; ports(*):[31000-32000]

I1212 17:18:39.452038 8 detector.cpp:152] Detected a new leader: (id='1')

I1212 17:18:39.452385 8 group.cpp:697] Trying to get '/mesos/json.info_0000000001' in ZooKeeper

I1212 17:18:39.452198 1 slave.cpp:541] Agent attributes: [ ]

I1212 17:18:39.454398 1 slave.cpp:546] Agent hostname: openshift-installer-native-docker-compose

I1212 17:18:39.459702 8 zookeeper.cpp:259] A new leading master (UPID=master@127.0.0.1:5050) is detected

I1212 17:18:39.462762 9 state.cpp:57] Recovering state from '/var/tmp/mesos/meta'

I1212 17:18:39.464331 9 status_update_manager.cpp:203] Recovering status update manager

I1212 17:18:39.465109 9 docker.cpp:764] Recovering Docker containers

I1212 17:18:39.465148 11 containerizer.cpp:555] Recovering containerizer

I1212 17:18:39.472216 6 provisioner.cpp:253] Provisioner recovery complete

I1212 17:18:39.917292 8 slave.cpp:5281] Finished recovery

I1212 17:18:39.938516 8 slave.cpp:915] New master detected at master@127.0.0.1:5050

I1212 17:18:39.939077 8 slave.cpp:936] No credentials provided. Attempting to register without authentication

I1212 17:18:39.939728 8 slave.cpp:947] Detecting new master

I1212 17:18:39.938575 9 status_update_manager.cpp:177] Pausing sending status updates

E1212 17:18:40.622269 8 process.cpp:2154] Failed to shutdown socket with fd 12: Transport endpoint is not connected

I1212 17:18:40.631978 8 slave.cpp:4179] Got exited event for master@127.0.0.1:5050

W1212 17:18:40.634527 8 slave.cpp:4184] Master disconnected! Waiting for a new master to be elected

E1212 17:18:40.632072 13 process.cpp:2154] Failed to shutdown socket with fd 12: Transport endpoint is not connected

E1212 17:18:42.052165 13 process.cpp:2154] Failed to shutdown socket with fd 12: Transport endpoint is not connected

I1212 17:18:42.052312 7 slave.cpp:4179] Got exited event for master@127.0.0.1:5050

W1212 17:18:42.057737 7 slave.cpp:4184] Master disconnected! Waiting for a new master to be elected

I1212 17:18:45.093793 6 slave.cpp:4179] Got exited event for master@127.0.0.1:5050

W1212 17:18:45.094339 6 slave.cpp:4184] Master disconnected! Waiting for a new master to be elected

E1212 17:18:45.093952 13 process.cpp:2154] Failed to shutdown socket with fd 12: Transport endpoint is not connected

I1212 17:18:48.748410 9 slave.cpp:4179] Got exited event for master@127.0.0.1:5050

W1212 17:18:48.748883 9 slave.cpp:4184] Master disconnected! Waiting for a new master to be elected

E1212 17:18:48.748569 13 process.cpp:2154] Failed to shutdown socket with fd 12: Transport endpoint is not connected

2016-12-12 17:18:49,470:1(0x7ff8f4dfc700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 12ms

I1212 17:18:50.030248 8 detector.cpp:152] Detected a new leader: None

I1212 17:18:50.030894 8 slave.cpp:908] Lost leading master

I1212 17:18:50.031404 8 slave.cpp:947] Detecting new master

I1212 17:18:50.031327 7 status_update_manager.cpp:177] Pausing sending status updates

2016-12-12 17:18:53,405:1(0x7ff8f4dfc700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 43ms

I1212 17:19:02.637687 7 slave.cpp:1324] Skipping registration because no master present

I1212 17:19:22.959288 8 detector.cpp:152] Detected a new leader: (id='2')

I1212 17:19:22.961132 8 group.cpp:697] Trying to get '/mesos/json.info_0000000002' in ZooKeeper

I1212 17:19:22.965332 8 zookeeper.cpp:259] A new leading master (UPID=master@127.0.0.1:5050) is detected

I1212 17:19:22.971281 8 slave.cpp:915] New master detected at master@127.0.0.1:5050

I1212 17:19:22.972019 8 slave.cpp:936] No credentials provided. Attempting to register without authentication

I1212 17:19:22.975116 8 slave.cpp:947] Detecting new master

I1212 17:19:22.971410 9 status_update_manager.cpp:177] Pausing sending status updates

I1212 17:19:23.927111 11 slave.cpp:1115] Registered with master master@127.0.0.1:5050; given agent ID a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0

I1212 17:19:23.931748 12 status_update_manager.cpp:184] Resuming sending status updates

I1212 17:19:24.781162 11 slave.cpp:1175] Forwarding total oversubscribed resources {}

I1212 17:19:39.456789 10 slave.cpp:5044] Current disk usage 62.36%. Max allowed age: 1.934608332272824days

2016-12-12 17:19:46,345:1(0x7ff8f4dfc700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 20ms

2016-12-12 17:20:19,750:1(0x7ff8f4dfc700):ZOO_WARN@zookeeper_interest@1570: Exceeded deadline by 41ms

Step 4: Connect to Mesos Web Portal

The Mesos web portal runs on port 5050 by default. In my case, Mesos runs in Docker containers hosted on a VirtualBox Docker host VM. Since the VM only has a NATed virtual Ethernet interface, I need to add a port forwarding rule in VirtualBox so that I can reach port 5050 of the Docker host:

![2016-12-12-18_41_57-regel-fur-port-weiterleitung]()
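
For readers who prefer the command line over the VirtualBox dialog, the same kind of forwarding rule can be sketched with `VBoxManage`. The VM name `dockerhost` and the rule name `mesos` are assumptions made for illustration, and `natpf_rule` and `forward_port` are helper names invented for this sketch; substitute the values of your own setup.

```shell
#!/bin/sh
# Build a VirtualBox NAT port forwarding rule of the form
# "<name>,tcp,<host ip>,<host port>,<guest ip>,<guest port>".
# Empty IP fields mean "any address".
natpf_rule() {
  rule_name="$1"
  port="$2"
  printf '%s,tcp,,%s,,%s\n' "$rule_name" "$port" "$port"
}

# Add the rule to the first NAT adapter of a running VM.
# The VM name is whatever "VBoxManage list vms" reports for your Docker host.
forward_port() {
  vm_name="$1"
  VBoxManage controlvm "$vm_name" natpf1 "$(natpf_rule "$2" "$3")"
}

# Show the rule that would be added for the Mesos master portal.
natpf_rule mesos 5050
```

On the machine running VirtualBox, `forward_port dockerhost mesos 5050` would then add the rule to the running VM (with `dockerhost` replaced by the real VM name). For a powered-off VM, `VBoxManage modifyvm` with the `--natpf1` option takes the same rule string.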

After that, we can access the Mesos master portal from a browser on the base system:

![2016-12-12-18_48_01-mesos]()

Note: if you see the error “Failed to connect to 127.0.0.1:5050!”, try reloading (instead of retrying) the page via the browser reload function. See Appendix A.1 for details.

We can see the single Mesos slave (i.e. agent) connected to the master on the left:

![2016-12-12-19_02_19-mesos]()

The details of the agents (slaves) can be seen by clicking on the Agents link in the menu:

![2016-12-16-20_34_44-mesos]()
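
The information shown in the portal is also served as JSON by the master itself. The following is a minimal sketch, assuming the master is reachable on `localhost:5050` as set up above and that `curl` is installed; `mesos_url` and `mesos_get` are helper names made up for this example.

```shell
#!/bin/sh
# Base URL of the Mesos master; override it if your port forwarding differs.
MESOS_MASTER="${MESOS_MASTER:-http://localhost:5050}"

# Build the URL of a master endpoint, e.g. /master/state.
mesos_url() {
  printf '%s%s\n' "$MESOS_MASTER" "$1"
}

# Fetch an endpoint; -f makes curl fail on HTTP errors, -sS hides the progress bar.
mesos_get() {
  curl -fsS "$(mesos_url "$1")"
}

# Endpoints one might query (only printed here, not called):
mesos_url /master/state    # full cluster state, including agents and frameworks
mesos_url /master/slaves   # registered agents and their resources
```

Calling `mesos_get /master/slaves` should then return the agent that is listed in the portal, provided the port forwarding rule is in place.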

So, what is next? It looks like we need a framework like Marathon in order to start applications on Mesos. Let us start Marathon now.

Step 5: Start Marathon

Let us start Marathon in a container. However, we will use port 7070 instead of the default 8080, because 8080 collides with a port that is used by ZooKeeper. In addition, we will change the master URI and let it point to the external Mesos master we started in Step 3. Moreover, we need to set the environment variable MESOS_WORK_DIR because of a Mesos bug. See Appendix B for details.

(dockerhost)$ sudo docker run -it --name marathon --rm --net=host -e MESOS_WORK_DIR=/var/lib/mesos --entrypoint=bash mesosphere/marathon

(container)# ./bin/start --master zk://127.0.0.1:2181/mesos --zk zk://localhost:2181/marathon --http_port=7070

MESOS_NATIVE_JAVA_LIBRARY is not set. Searching in /usr/lib /usr/local/lib.

MESOS_NATIVE_LIBRARY, MESOS_NATIVE_JAVA_LIBRARY set to '/usr/lib/libmesos.so'

No start hook file found ($HOOK_MARATHON_START). Proceeding with the start script.

[2016-12-13 14:46:09,798] INFO Starting Marathon 1.3.6/unknown with --master zk://127.0.0.1:2181/mesos --zk zk://localhost:2181/marathon --http_port=7070 (mesosphere.marathon.Main$:main)

[2016-12-13 14:46:10,148] WARN Method [public javax.ws.rs.core.Response mesosphere.marathon.api.MarathonExceptionMapper.toResponse(java.lang.Throwable)] is synthetic and is being intercepted by [mesosphere.marathon.DebugModule$MetricsBehavior@985696]. This could indicate a bug. The method may be intercepted twice, or may not be intercepted at all. (com.google.inject.internal.ProxyFactory:main)

[2016-12-13 14:46:10,336] INFO Logging initialized @1841ms (org.eclipse.jetty.util.log:main)

[2016-12-13 14:46:10,849] INFO Slf4jLogger started (akka.event.slf4j.Slf4jLogger:marathon-akka.actor.default-dispatcher-2)

[2016-12-13 14:46:11,060] INFO Started TaskTrackerUpdateStepsProcessorImpl with steps:

* continueOnError(notifyHealthCheckManager)

* continueOnError(notifyRateLimiter)

* continueOnError(notifyLaunchQueue)

* continueOnError(emitUpdate)

* continueOnError(postTaskStatusEvent)

* continueOnError(scaleApp) (mesosphere.marathon.core.task.tracker.impl.TaskTrackerUpdateStepProcessorImpl:main)

[2016-12-13 14:46:11,128] INFO Calling reviveOffers is enabled. Use --disable_revive_offers_for_new_apps to disable. (mesosphere.marathon.core.flow.FlowModule:main)

[2016-12-13 14:46:11,195] INFO Loading plugins implementing 'mesosphere.marathon.plugin.auth.Authenticator' from these urls: [] (mesosphere.marathon.core.plugin.impl.PluginManagerImpl:main)

[2016-12-13 14:46:11,200] INFO Found 0 plugins. (mesosphere.marathon.core.plugin.impl.PluginManagerImpl:main)

[2016-12-13 14:46:11,202] INFO Loading plugins implementing 'mesosphere.marathon.plugin.auth.Authorizer' from these urls: [] (mesosphere.marathon.core.plugin.impl.PluginManagerImpl:main)

[2016-12-13 14:46:11,203] INFO Found 0 plugins. (mesosphere.marathon.core.plugin.impl.PluginManagerImpl:main)

[2016-12-13 14:46:11,205] INFO Started status update processor (mesosphere.marathon.core.task.update.impl.TaskStatusUpdateProcessorImpl$$EnhancerByGuice$$ca7c928f:main)

[2016-12-13 14:46:11,321] INFO All actors suspended:

* Actor[akka://marathon/user/groupManager#1830533307]

* Actor[akka://marathon/user/launchQueue#-485770292]

* Actor[akka://marathon/user/killOverdueStagedTasks#540935184]

* Actor[akka://marathon/user/offersWantedForReconciliation#-876030651]

* Actor[akka://marathon/user/taskKillServiceActor#1912899513]

* Actor[akka://marathon/user/rateLimiter#1490654495]

* Actor[akka://marathon/user/offerMatcherLaunchTokens#-1930600924]

* Actor[akka://marathon/user/expungeOverdueLostTasks#-405721147]

* Actor[akka://marathon/user/reviveOffersWhenWanted#1467955826]

* Actor[akka://marathon/user/taskTracker#623538008]

* Actor[akka://marathon/user/offerMatcherManager#1760835888] (mesosphere.marathon.core.leadership.impl.LeadershipCoordinatorActor:marathon-akka.actor.default-dispatcher-2)

[2016-12-13 14:46:11,417] INFO Adding HTTP support. (mesosphere.chaos.http.HttpModule:main)

[2016-12-13 14:46:11,418] INFO No HTTPS support configured. (mesosphere.chaos.http.HttpModule:main)

[2016-12-13 14:46:11,497] INFO Starting up (mesosphere.marathon.MarathonSchedulerService$$EnhancerByGuice$$4bb98838:MarathonSchedulerService$$EnhancerByGuice$$4bb98838)

[2016-12-13 14:46:11,497] INFO Beginning run (mesosphere.marathon.MarathonSchedulerService$$EnhancerByGuice$$4bb98838:MarathonSchedulerService$$EnhancerByGuice$$4bb98838)

[2016-12-13 14:46:11,498] INFO Will offer leadership after 500 milliseconds backoff (mesosphere.marathon.core.election.impl.CuratorElectionService:MarathonSchedulerService$$EnhancerByGuice$$4bb98838)

[2016-12-13 14:46:11,504] INFO jetty-9.3.z-SNAPSHOT (org.eclipse.jetty.server.Server:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,535] INFO Now standing by. Closing existing handles and rejecting new. (mesosphere.marathon.core.event.impl.stream.HttpEventStreamActor:marathon-akka.actor.default-dispatcher-8)

[2016-12-13 14:46:11,704] INFO Registering com.codahale.metrics.jersey.InstrumentedResourceMethodDispatchAdapter as a provider class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,705] INFO Registering mesosphere.marathon.api.MarathonExceptionMapper as a provider class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.AppsResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.TasksResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.EventSubscriptionsResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.QueueResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.GroupsResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.InfoResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.LeaderResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.DeploymentsResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.ArtifactsResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.SchemaResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,706] INFO Registering mesosphere.marathon.api.v2.PluginsResource as a root resource class (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,710] INFO Initiating Jersey application, version 'Jersey: 1.18.1 02/19/2014 03:28 AM' (com.sun.jersey.server.impl.application.WebApplicationImpl:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,765] INFO Binding com.codahale.metrics.jersey.InstrumentedResourceMethodDispatchAdapter to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:11,782] INFO Binding mesosphere.marathon.api.MarathonExceptionMapper to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,027] INFO Using HA and therefore offering leadership (mesosphere.marathon.core.election.impl.CuratorElectionService:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,028] INFO Will do leader election through localhost:2181 (mesosphere.marathon.core.election.impl.CuratorElectionService:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,073] WARN session timeout [10000] is less than connection timeout [15000] (org.apache.curator.CuratorZookeeperClient:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,096] INFO Starting (org.apache.curator.framework.imps.CuratorFrameworkImpl:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:zookeeper.version=3.5.0-alpha-1615249, built on 08/01/2014 22:13 GMT (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:host.name=openshift-installer-native-docker-compose (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:java.version=1.8.0_102 (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:java.vendor=Oracle Corporation (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:java.home=/usr/lib/jvm/java-8-openjdk-amd64/jre (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:java.class.path=./bin/../target/marathon-assembly-1.3.6.jar (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib/x86_64-linux-gnu/jni:/lib/x86_64-linux-gnu:/usr/lib/x86_64-linux-gnu:/usr/lib/jni:/lib:/usr/lib (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:java.io.tmpdir=/tmp (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:java.compiler=<NA> (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:os.name=Linux (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:os.arch=amd64 (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:os.version=4.2.0-42-generic (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:user.name=root (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:user.home=/root (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:user.dir=/marathon (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:os.memory.free=110MB (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:os.memory.max=880MB (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Client environment:os.memory.total=158MB (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,124] INFO Initiating client connection, connectString=localhost:2181 sessionTimeout=10000 watcher=org.apache.curator.ConnectionState@3411e275 (org.apache.zookeeper.ZooKeeper:ForkJoinPool-2-worker-13)

[2016-12-13 14:46:12,194] INFO Opening socket connection to server localhost/0:0:0:0:0:0:0:1:2181. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn:ForkJoinPool-2-worker-13-SendThread(localhost:2181))

[2016-12-13 14:46:12,201] INFO Socket connection established to localhost/0:0:0:0:0:0:0:1:2181, initiating session (org.apache.zookeeper.ClientCnxn:ForkJoinPool-2-worker-13-SendThread(localhost:2181))

[2016-12-13 14:46:12,212] INFO Session establishment complete on server localhost/0:0:0:0:0:0:0:1:2181, sessionid = 0x158f4c9e1f90014, negotiated timeout = 10000 (org.apache.zookeeper.ClientCnxn:ForkJoinPool-2-worker-13-SendThread(localhost:2181))

[2016-12-13 14:46:12,217] INFO State change: CONNECTED (org.apache.curator.framework.state.ConnectionStateManager:ForkJoinPool-2-worker-13-EventThread)

[2016-12-13 14:46:12,279] INFO Elected (LeaderLatchListener Interface) (mesosphere.marathon.core.election.impl.CuratorElectionService:pool-1-thread-1)

[2016-12-13 14:46:12,284] INFO As new leader running the driver (mesosphere.marathon.MarathonSchedulerService$$EnhancerByGuice$$4bb98838:pool-1-thread-1)

[2016-12-13 14:46:12,302] INFO Initiating client connection, connectString=localhost:2181 sessionTimeout=10000 watcher=com.twitter.zk.EventBroker@5301f666 (org.apache.zookeeper.ZooKeeper:pool-1-thread-1)

[2016-12-13 14:46:12,310] INFO Opening socket connection to server localhost/127.0.0.1:2181. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn:pool-1-thread-1-SendThread(localhost:2181))

[2016-12-13 14:46:12,310] INFO Socket connection established to localhost/127.0.0.1:2181, initiating session (org.apache.zookeeper.ClientCnxn:pool-1-thread-1-SendThread(localhost:2181))

[2016-12-13 14:46:12,323] INFO Session establishment complete on server localhost/127.0.0.1:2181, sessionid = 0x158f4c9e1f90015, negotiated timeout = 10000 (org.apache.zookeeper.ClientCnxn:pool-1-thread-1-SendThread(localhost:2181))

[2016-12-13 14:46:12,367] INFO Binding mesosphere.marathon.api.v2.AppsResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,391] INFO Binding mesosphere.marathon.api.v2.TasksResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,398] INFO Binding mesosphere.marathon.api.v2.EventSubscriptionsResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,398] INFO Event notification disabled. (mesosphere.marathon.core.event.EventModule:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,404] INFO Binding mesosphere.marathon.api.v2.QueueResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,425] INFO Binding mesosphere.marathon.api.v2.GroupsResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,429] INFO Binding mesosphere.marathon.api.v2.InfoResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,431] INFO Binding mesosphere.marathon.api.v2.LeaderResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,433] INFO Binding mesosphere.marathon.api.v2.DeploymentsResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,441] INFO Binding mesosphere.marathon.api.v2.ArtifactsResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,443] INFO Binding mesosphere.marathon.api.v2.SchemaResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,445] INFO Binding mesosphere.marathon.api.v2.PluginsResource to GuiceManagedComponentProvider with the scope "Singleton" (com.sun.jersey.guice.spi.container.GuiceComponentProviderFactory:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,445] INFO Loading plugins implementing 'mesosphere.marathon.plugin.http.HttpRequestHandler' from these urls: [] (mesosphere.marathon.core.plugin.impl.PluginManagerImpl:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,446] INFO Found 0 plugins. (mesosphere.marathon.core.plugin.impl.PluginManagerImpl:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,449] INFO Migration successfully applied for version Version(1, 3, 6) (mesosphere.marathon.state.Migration:ForkJoinPool-2-worker-5)

[2016-12-13 14:46:12,451] INFO Call preDriverStarts callbacks on EntityStoreCache(MarathonStore(app:)), EntityStoreCache(MarathonStore(group:)), EntityStoreCache(MarathonStore(deployment:)), EntityStoreCache(MarathonStore(framework:)), EntityStoreCache(MarathonStore(taskFailure:)), EntityStoreCache(MarathonStore(events:)) (mesosphere.marathon.MarathonSchedulerService$$EnhancerByGuice$$4bb98838:pool-1-thread-1)

[2016-12-13 14:46:12,468] INFO Finished preDriverStarts callbacks (mesosphere.marathon.MarathonSchedulerService$$EnhancerByGuice$$4bb98838:pool-1-thread-1)

[2016-12-13 14:46:12,469] INFO Started o.e.j.s.ServletContextHandler@65817241{/,null,AVAILABLE} (org.eclipse.jetty.server.handler.ContextHandler:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,484] INFO ExpungeOverdueLostTasksActor has started (mesosphere.marathon.core.task.jobs.impl.ExpungeOverdueLostTasksActor:marathon-akka.actor.default-dispatcher-4)

[2016-12-13 14:46:12,487] INFO TaskTrackerActor is starting. Task loading initiated. (mesosphere.marathon.core.task.tracker.impl.TaskTrackerActor:marathon-akka.actor.default-dispatcher-6)

[2016-12-13 14:46:12,489] INFO started RateLimiterActor (mesosphere.marathon.core.launchqueue.impl.RateLimiterActor:marathon-akka.actor.default-dispatcher-4)

[2016-12-13 14:46:12,492] INFO no interest in offers for reservation reconciliation anymore. (mesosphere.marathon.core.matcher.reconcile.impl.OffersWantedForReconciliationActor:marathon-akka.actor.default-dispatcher-9)

[2016-12-13 14:46:12,494] INFO Started. Will remain interested in offer reconciliation for 17500 milliseconds when needed. (mesosphere.marathon.core.matcher.reconcile.impl.OffersWantedForReconciliationActor:marathon-akka.actor.default-dispatcher-9)

[2016-12-13 14:46:12,514] INFO All actors active:

* Actor[akka://marathon/user/groupManager#1830533307]

* Actor[akka://marathon/user/launchQueue#-485770292]

* Actor[akka://marathon/user/killOverdueStagedTasks#540935184]

* Actor[akka://marathon/user/offersWantedForReconciliation#-876030651]

* Actor[akka://marathon/user/taskKillServiceActor#1912899513]

* Actor[akka://marathon/user/rateLimiter#1490654495]

* Actor[akka://marathon/user/offerMatcherLaunchTokens#-1930600924]

* Actor[akka://marathon/user/expungeOverdueLostTasks#-405721147]

* Actor[akka://marathon/user/reviveOffersWhenWanted#1467955826]

* Actor[akka://marathon/user/taskTracker#623538008]

* Actor[akka://marathon/user/offerMatcherManager#1760835888] (mesosphere.marathon.core.leadership.impl.LeadershipCoordinatorActor:marathon-akka.actor.default-dispatcher-5)

[2016-12-13 14:46:12,520] INFO Started ServerConnector@7fc3545e{HTTP/1.1,[http/1.1]}{0.0.0.0:7070} (org.eclipse.jetty.server.ServerConnector:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,520] INFO Started @4026ms (org.eclipse.jetty.server.Server:HttpService$$EnhancerByGuice$$1fd57a30 STARTING)

[2016-12-13 14:46:12,521] INFO All services up and running. (mesosphere.marathon.Main$:main)

[2016-12-13 14:46:12,524] INFO About to load 0 tasks (mesosphere.marathon.core.task.tracker.impl.TaskLoaderImpl:ForkJoinPool-2-worker-5)

[2016-12-13 14:46:12,536] INFO Loaded 0 tasks (mesosphere.marathon.core.task.tracker.impl.TaskLoaderImpl:ForkJoinPool-2-worker-5)

[2016-12-13 14:46:12,545] INFO Task loading complete. (mesosphere.marathon.core.task.tracker.impl.TaskTrackerActor:marathon-akka.actor.default-dispatcher-8)

[2016-12-13 14:46:12,555] INFO interested in offers for reservation reconciliation because of becoming leader (until 2016-12-13T14:46:29.995Z) (mesosphere.marathon.core.matcher.reconcile.impl.OffersWantedForReconciliationActor:marathon-akka.actor.default-dispatcher-9)

[2016-12-13 14:46:12,588] INFO Received offers WANTED notification (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-6)

[2016-12-13 14:46:12,589] INFO => revive offers NOW, canceling any scheduled revives (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-6)

[2016-12-13 14:46:12,589] INFO 2 further revives still needed. Repeating reviveOffers according to --revive_offers_repetitions 3 (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-6)

[2016-12-13 14:46:12,589] INFO => Schedule next revive at 2016-12-13T14:46:17.586Z in 4998 milliseconds, adhering to --min_revive_offers_interval 5000 (ms) (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-6)

[2016-12-13 14:46:12,638] INFO Create new Scheduler Driver with frameworkId: Some(value: "44a35e16-dc32-4f91-afac-33dfff498944-0000"

) and scheduler mesosphere.marathon.core.heartbeat.MesosHeartbeatMonitor$$EnhancerByGuice$$c730b9b8@15d42ccb (mesosphere.marathon.MarathonSchedulerDriver$:pool-1-thread-1)

WARNING: Logging before InitGoogleLogging() is written to STDERR

W1213 14:46:12.777942 192 sched.cpp:1697]

**************************************************

Scheduler driver bound to loopback interface! Cannot communicate with remote master(s). You might want to set 'LIBPROCESS_IP' environment variable to use a routable IP address.

**************************************************

I1213 14:46:12.791020 196 sched.cpp:226] Version: 1.0.1

2016-12-13 14:46:12,791:134(0x7f351b0c7700):ZOO_INFO@log_env@726: Client environment:zookeeper.version=zookeeper C client 3.4.8

2016-12-13 14:46:12,791:134(0x7f351b0c7700):ZOO_INFO@log_env@730: Client environment:host.name=openshift-installer

2016-12-13 14:46:12,792:134(0x7f351b0c7700):ZOO_INFO@log_env@737: Client environment:os.name=Linux

2016-12-13 14:46:12,792:134(0x7f351b0c7700):ZOO_INFO@log_env@738: Client environment:os.arch=4.2.0-42-generic

2016-12-13 14:46:12,792:134(0x7f351b0c7700):ZOO_INFO@log_env@739: Client environment:os.version=#49~14.04.1-Ubuntu SMP Wed Jun 29 20:22:11 UTC 2016

2016-12-13 14:46:12,792:134(0x7f351b0c7700):ZOO_INFO@log_env@747: Client environment:user.name=(null)

2016-12-13 14:46:12,792:134(0x7f351b0c7700):ZOO_INFO@log_env@755: Client environment:user.home=/root

2016-12-13 14:46:12,792:134(0x7f351b0c7700):ZOO_INFO@log_env@767: Client environment:user.dir=/marathon

2016-12-13 14:46:12,792:134(0x7f351b0c7700):ZOO_INFO@zookeeper_init@800: Initiating client connection, host=127.0.0.1:2181 sessionTimeout=10000 watcher=0x7f3522fb1d80 sessionId=0 sessionPasswd=<null> context=0x7f352403b5c0 flags=0

[2016-12-13 14:46:12,799] INFO Reset offerLeadership backoff (mesosphere.marathon.core.election.impl.ExponentialBackoff:pool-1-thread-1)

[2016-12-13 14:46:12,799] INFO Became active. Accepting event streaming requests. (mesosphere.marathon.core.event.impl.stream.HttpEventStreamActor:marathon-akka.actor.default-dispatcher-6)

[2016-12-13 14:46:12,800] INFO Starting scheduler actor (mesosphere.marathon.MarathonSchedulerActor:marathon-akka.actor.default-dispatcher-8)

2016-12-13 14:46:12,803:134(0x7f35198c4700):ZOO_INFO@check_events@1728: initiated connection to server [127.0.0.1:2181]

[2016-12-13 14:46:12,811] INFO Scheduler actor ready (mesosphere.marathon.MarathonSchedulerActor:marathon-akka.actor.default-dispatcher-6)

2016-12-13 14:46:12,826:134(0x7f35198c4700):ZOO_INFO@check_events@1775: session establishment complete on server [127.0.0.1:2181], sessionId=0x158f4c9e1f90016, negotiated timeout=10000

I1213 14:46:12.828322 205 group.cpp:349] Group process (group(1)@127.0.0.1:35047) connected to ZooKeeper

I1213 14:46:12.829164 205 group.cpp:837] Syncing group operations: queue size (joins, cancels, datas) = (0, 0, 0)

I1213 14:46:12.829583 205 group.cpp:427] Trying to create path '/mesos' in ZooKeeper

I1213 14:46:12.833498 205 detector.cpp:152] Detected a new leader: (id='1')

I1213 14:46:12.834187 205 group.cpp:706] Trying to get '/mesos/json.info_0000000001' in ZooKeeper

I1213 14:46:12.836730 205 zookeeper.cpp:259] A new leading master (UPID=master@127.0.0.1:5050) is detected

I1213 14:46:12.837736 205 sched.cpp:330] New master detected at master@127.0.0.1:5050

I1213 14:46:12.838312 205 sched.cpp:341] No credentials provided. Attempting to register without authentication

I1213 14:46:12.843353 205 sched.cpp:743] Framework registered with 44a35e16-dc32-4f91-afac-33dfff498944-0000

[2016-12-13 14:46:12,846] INFO Creating tombstone for old twitter commons leader election (mesosphere.marathon.core.election.impl.CuratorElectionService:pool-1-thread-1)

[2016-12-13 14:46:12,872] INFO Registered as 44a35e16-dc32-4f91-afac-33dfff498944-0000 to master '03f81417-51c9-4055-adbe-c1fb74fc8ab4' (mesosphere.marathon.MarathonScheduler$$EnhancerByGuice$$1ef061b0:Thread-14)

[2016-12-13 14:46:12,872] INFO Store framework id: value: "44a35e16-dc32-4f91-afac-33dfff498944-0000"

(mesosphere.util.state.FrameworkIdUtil:Thread-14)

[2016-12-13 14:46:12,895] INFO Received reviveOffers notification: SchedulerRegisteredEvent (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-3)

[2016-12-13 14:46:16,658] INFO 10.0.2.2 - - [13/Dec/2016:14:46:16 +0000] "GET //localhost:7070/v2/deployments HTTP/1.1" 200 22 "http://localhost:7070/ui/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/54.0.2840.99 Safari/537.36" 14 (mesosphere.chaos.http.ChaosRequestLog$$EnhancerByGuice$$c1e74978:qtp1755811644-35)

[2016-12-13 14:46:16,660] INFO 10.0.2.2 - - [13/Dec/2016:14:46:16 +0000] "GET //localhost:7070/v2/groups?embed=group.groups&embed=group.apps&embed=group.apps.deployments&embed=group.apps.counts&embed=group.apps.readiness HTTP/1.1" 200 95 "http://localhost:7070/ui/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/54.0.2840.99 Safari/537.36" 15 (mesosphere.chaos.http.ChaosRequestLog$$EnhancerByGuice$$c1e74978:qtp1755811644-36)

[2016-12-13 14:46:16,666] INFO 10.0.2.2 - - [13/Dec/2016:14:46:16 +0000] "GET //localhost:7070/v2/queue HTTP/1.1" 200 32 "http://localhost:7070/ui/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/54.0.2840.99 Safari/537.36" 15 (mesosphere.chaos.http.ChaosRequestLog$$EnhancerByGuice$$c1e74978:qtp1755811644-34)

[2016-12-13 14:46:17,603] INFO Received TimedCheck (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-8)

[2016-12-13 14:46:17,604] INFO => revive offers NOW, canceling any scheduled revives (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-8)

[2016-12-13 14:46:17,609] INFO 2 further revives still needed. Repeating reviveOffers according to --revive_offers_repetitions 3 (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-8)

[2016-12-13 14:46:17,612] INFO => Schedule next revive at 2016-12-13T14:46:22.602Z in 4998 milliseconds, adhering to --min_revive_offers_interval 5000 (ms) (mesosphere.marathon.core.flow.impl.ReviveOffersActor:marathon-akka.actor.default-dispatcher-8)

Let us connect to the Marathon portal:

We have chosen to run Marathon on port 7070. In my case, the Docker host VirtualBox VM only has a NATed interface, so I need to forward the Marathon port in VirtualBox:

![2016-12-13-14_59_36-regel-fur-port-weiterleitung]()

Now we can access the Marathon dashboard:

![2016-12-13-15_17_35-marathon]()

Let us see whether Marathon is visible on Mesos:

![2016-12-13-15_44_39-mesos]()

Yes, the Marathon framework can be seen on the Mesos master; perfect.

![thumps_up_3]()
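
The same check can be scripted against the Marathon REST API. The sketch below assumes Marathon listens on `localhost:7070` as configured in this step and that `curl` is available; `retry`, `marathon_get` and `check_marathon` are names invented for this example.

```shell
#!/bin/sh
# Base URL of Marathon; 7070 is the port chosen in this step.
MARATHON="${MARATHON:-http://localhost:7070}"

# Retry a command once per second until it succeeds or the attempts are used up.
retry() {
  attempts="$1"
  shift
  while [ "$attempts" -gt 0 ]; do
    "$@" && return 0
    attempts=$((attempts - 1))
    sleep 1
  done
  return 1
}

# Fetch a Marathon endpoint; -f makes curl fail on HTTP errors.
marathon_get() {
  curl -fsS "$MARATHON$1"
}

# Wait until Marathon answers on /ping, then print the leader and version info.
check_marathon() {
  retry 30 marathon_get /ping >/dev/null || return 1
  marathon_get /v2/leader
  marathon_get /v2/info
}
```

Running `check_marathon` after the start command gives Marathon up to 30 seconds to come up before it reports a failure.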

Our topology now looks as follows:

![2016-12-14-02_14_43-mesos-marathon-architecture-google-slides]()

However, we have not started any Marathon application yet. Let us do so now.

Step 6: Start a “Hello World” Application via Marathon Web Portal in a Mesos Container

Let us create an application running in a Mesos container on the Marathon web portal by clicking the ![Create Application]() button.

We choose

- ID: while-loop-hello-world

- ID needs to consist of lowercase letters, digits, hyphens, “.”, “..”

- CPUs: 0.1

- If you keep the default of 1, you might run into resource problems unless 4 CPUs are available for the 4 instances. In that case, one or more instances may remain in the “Waiting” state.

- Memory: 32 MiB

- 32 MiB is the minimum value supported

- Disk Space: 0

- Instances: 4

- Command:

while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

- We have redirected the output of the script because STDERR/STDOUT retrieval currently does not work on the Marathon portal (see Appendix A.5).

![2016-12-18-12_46_08-marathon]()

After less than 5 seconds, we see that all 4 instances are up and running:

![2016-12-18-12_46_16-marathon]()
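
The form values chosen above map to a small JSON app definition, so the same application could also be created through the Marathon REST API instead of the web portal. This is a sketch under the assumption that Marathon is reachable on `localhost:7070` and that `curl` is installed; the function names are made up for this example.

```shell
#!/bin/sh
# Base URL of Marathon; 7070 is the port chosen in Step 5.
MARATHON="${MARATHON:-http://localhost:7070}"

# Print an app definition that mirrors the form values chosen above.
# The quoted here-document keeps the backslashes needed for valid JSON.
app_definition() {
  cat <<'EOF'
{
  "id": "/while-loop-hello-world",
  "cmd": "while true; do echo \"I am a Hello World script\"; sleep 1; done 2>&1 1> this_is_my_output.log",
  "cpus": 0.1,
  "mem": 32,
  "disk": 0,
  "instances": 4
}
EOF
}

# Create the application via POST /v2/apps.
create_app() {
  app_definition | curl -fsS -X POST -H 'Content-Type: application/json' \
    --data-binary @- "$MARATHON/v2/apps"
}

# List the tasks of the application once it is deployed.
list_tasks() {
  curl -fsS "$MARATHON/v2/apps/while-loop-hello-world/tasks"
}
```

Calling `create_app` followed by `list_tasks` should show the same four instances as the portal. Keeping the definition in a function (or a file) makes it easy to put under version control.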

On the Docker host, we can see the four instances running:

$ ps -ef | grep Hello | grep -v grep

root 14137 14095 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14138 14096 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14141 14116 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14142 14127 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

We can also find the log files on the Docker host:

$ sudo find / -name this_is_my_output.log

/vagrant/jenkins_home/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world.927a5843-c517-11e6-ad85-02422b10e522/runs/0f079dcc-25e7-4ad6-b263-4761370a6173/this_is_my_output.log

/vagrant/jenkins_home/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world.9285a2e4-c517-11e6-ad85-02422b10e522/runs/44a5c7dd-59f3-4b59-8d94-33754a3cbdc3/this_is_my_output.log

/vagrant/jenkins_home/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world.92861815-c517-11e6-ad85-02422b10e522/runs/5400a1d9-582f-4d59-9b81-a719d21c60a1/this_is_my_output.log

/vagrant/jenkins_home/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world.9286db66-c517-11e6-ad85-02422b10e522/runs/f3145a9f-2c88-4f99-a8de-618a4157b9e8/this_is_my_output.log

And we can see the output:

$ sudo tail -F /vagrant/jenkins_home/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world.927a5843-c517-11e6-ad85-02422b10e522/runs/0f079dcc-25e7-4ad6-b263-4761370a6173/this_is_my_output.log

I am a Hello World script

I am a Hello World script

I am a Hello World script

I am a Hello World script

I am a Hello World script

...

![thumps_up_3]()

Step 7: Test of Application Resiliency

Step 7.1: Killing a Process and Testing Whether It Is Restarted Automatically

Now let us see what happens if one of the processes dies. For that, we simply kill one of the “Hello World” processes found on the Docker host:

(dockerhost)$ ps -ef | grep Hello | grep -v grep

root 14137 14095 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14138 14096 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14141 14116 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14142 14127 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

(dockerhost)$ sudo kill -9 14137

(dockerhost)$ ps -ef | grep Hello | grep -v grep

root 14138 14096 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14141 14116 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 14142 14127 0 11:46 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

root 19818 19807 1 12:04 ? 00:00:00 sh -c while true; do echo "I am a Hello World script"; sleep 1; done 2>&1 1> this_is_my_output.log

We can clearly see that the process was killed successfully, and a new process was started automatically.

![thumps_up_3]()

Step 7.2: Find Traces of the killed Process in the Logs

The output log of the old process can still be retrieved:

$ cat /vagrant/jenkins_home/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world.92861815-c517-11e6-ad85-02422b10e522/runs/5400a1d9-582f-4d59-9b81-a719d21c60a1/this_is_my_output.log

...

I am a Hello World script

I am a Hello World script

I am a Hello World script

I am a Hello World script

(EOF)

However, we cannot see any trace of something having gone wrong. So how can we troubleshoot if a process dies unexpectedly?

Here we are: the Marathon portal provides information on what happened:

![2016-12-18-13_20_47-marathon]()

![thumps_up_3]()

Step 8: Test: Resource Over-subscription not supported by Default?

In this test, we observe what happens if an application requests more resources than are offered. For that, we will try to start four instances with 1 CPU each, while the slave provides only 2 CPUs instead of the required 4:

![2016-12-16-20_52_42-marathon]()

We press ![Create Application]() again and see that 2 of the 4 instances are started soon:

![2016-12-16-20_54_49-marathon]()

Two of the task instances were started successfully, and two instances are waiting for resources and are “Unscheduled”. This is the expected behavior:

![2016-12-16-21_03_00-marathon]()

![thumps_up_3]()

On the Mesos Dashboard, we can see that the offered 2 CPUs are used by two instances already, leaving no CPU resources for the other two instances:

![2016-12-17-16_27_55-mesos]()
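The outcome can be reproduced with a small back-of-the-envelope calculation. The sketch below is a rough model that looks at CPUs only and assumes that no other tasks occupy the slave; it is not how Mesos or Marathon compute offers internally. The function name `cpu_fit` is made up for this example.

```shell
#!/bin/sh
# Rough model of the situation above, looking at CPUs only.
# Usage: cpu_fit <offered_cpus> <cpus_per_instance> <requested_instances>
# Prints "<running> <waiting>".
cpu_fit() {
    awk -v offered="$1" -v per="$2" -v wanted="$3" 'BEGIN {
        # Number of instances that fit into the offered CPUs; the small
        # epsilon guards against floating-point rounding (e.g. 2 / 0.1).
        fit = int(offered / per + 1e-9)
        running = (fit < wanted) ? fit : wanted
        print running, wanted - running
    }'
}

cpu_fit 2 1 4     # Step 8: 1 CPU per instance   -> prints "2 2"
cpu_fit 2 0.1 4   # Step 6: 0.1 CPU per instance -> prints "4 0"
```

With 1 CPU per instance, only two of the four instances fit into the 2 offered CPUs, which matches the two running and two waiting instances seen on the dashboards.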

Aurora vs. Marathon: Consider a situation where you want to run high-priority production applications together with low-priority development applications on the same hardware. Mesosphere Marathon does not provide a good answer for such situations. If you run into this, consider using Apache Aurora instead of Mesosphere Marathon. Apache Aurora allows high-priority applications to preempt low-priority applications.

Mesos: about hard limits on CPU resources: Mesos’ CPU reservation feels similar to a hard assignment of resources (since over-subscription is not supported by default, as we have seen above). Under the hood, however, Mesos does not apply hard limits on CPU usage unless the Mesos slave is started with --cgroups_enable_cfs (CFS = Completely Fair Scheduler). See also the second answer on this StackOverflow question. For more information on over-subscription in Mesos, see this Apache Mesos Documentation page.

Conclusion: By default, resource over-subscription is not allowed by Mesos. See this Apache Mesos Documentation page for more information about over-subscription.

Step 9: Start a “Hello World” Application via Marathon Web Portal in a Docker Container

In this step we learn how to run an application in a Docker container instead of a Mesos container. We will perform similar steps as in Step 6.

We choose

- ID: while-loop-hello-world-container-small

  - The ID needs to consist of lowercase letters, digits, hyphens, “.”, “..”

- CPUs: 0.1

  - If you keep the default of 1, you might run into resource problems unless 4 CPUs are available for the 4 instances. In that case, one or more instances may remain in the “Waiting” state.

- Memory: 32 MiB

  - 32 MiB is the minimum value supported

- Disk Space: 0

- Instances: 4

- Command:

  while true; do echo "I am a Hello World script in a Docker container"; sleep 1; done 2>&1 1> this_is_my_container_output.log

  - We have redirected the output of the script, because STDERR/STDOUT retrieval currently does not work on the Marathon portal (see Appendix A.5).

- Docker: ubuntu:latest

We click “Create Application”:

![2016-12-17-16_40_36-marathon]()

Choose a low number of CPUs (0.1 CPUs in my case), the lowest amount of memory and four instances, together with a while loop as the command:

![2016-12-18-15_39_21-marathon]()

In the Docker Container tab, we choose an Ubuntu image.

![2016-12-17-16_51_26-marathon]()
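For reference, the form values of this step correspond roughly to the following app definition, which could be sent to Marathon's REST API (`POST /v2/apps`) instead of using the portal. This is a sketch under assumptions: it uses the Marathon 1.x style `container.docker` section with host networking (newer Marathon versions describe networking differently), it assumes Marathon on `localhost:7070`, and the names `MARATHON_URL`, `docker_hello_world_app_json` and `create_docker_hello_world_app` are invented for the example.

```shell
#!/bin/sh
# Sketch: app definition for the Docker variant of the "Hello World" script.

# Assumption: Marathon listens on port 7070 of the local machine.
MARATHON_URL="${MARATHON_URL:-http://localhost:7070}"

# Print the app definition, including the Docker image from the
# "Docker Container" tab. Host networking is an assumption of this sketch.
docker_hello_world_app_json() {
    cat <<'EOF'
{
  "id": "/while-loop-hello-world-container-small",
  "cmd": "while true; do echo \"I am a Hello World script in a Docker container\"; sleep 1; done 2>&1 1> this_is_my_container_output.log",
  "cpus": 0.1,
  "mem": 32,
  "disk": 0,
  "instances": 4,
  "container": {
    "type": "DOCKER",
    "docker": {
      "image": "ubuntu:latest",
      "network": "HOST"
    }
  }
}
EOF
}

# Send the definition to Marathon. Only defined here, not executed.
create_docker_hello_world_app() {
    docker_hello_world_app_json |
        curl -s -X POST "$MARATHON_URL/v2/apps" \
             -H "Content-Type: application/json" \
             --data-binary @-
}
```

The only difference from an application running in a Mesos container is the additional `container` section.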

First, we see “waiting”,

![2016-12-17-16_51_48-marathon]()

then we see that all four instances are “running”:

![2016-12-18-09_17_33-marathon]()

![2016-12-18-15_43_26-marathon]()
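Before we look at the Docker host, a short note on how the resource values reach Docker: the `docker run` command lines in the process listing further down in this step carry `--cpu-shares 102` and `--memory 33554432`. These numbers are consistent with 1024 CPU shares per CPU (rounded down) and with the memory value converted from MiB to bytes. The sketch below only reproduces this arithmetic; the function names are made up, and the rounding rule is an assumption derived from the observed values.

```shell
#!/bin/sh
# Reproduce the docker run flags seen in this step from the Marathon values.

# CPUs -> --cpu-shares, assuming 1024 shares per CPU, rounded down.
cpu_shares() {
    awk -v cpus="$1" 'BEGIN { printf "%d\n", cpus * 1024 }'
}

# Memory in MiB -> --memory in bytes.
memory_bytes() {
    awk -v mib="$1" 'BEGIN { printf "%d\n", mib * 1024 * 1024 }'
}

cpu_shares 0.1      # prints 102
memory_bytes 32     # prints 33554432
```

Note that CPU shares are a relative weight and not a hard cap, which fits the remark in Step 8 that Mesos does not enforce hard CPU limits by default.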

On the Docker host, we can see the four Docker containers that have been started:

$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

78e346ddb3eb ubuntu:latest "/bin/sh -c 'while tr" About an hour ago Up About an hour mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.13aeacaa-20d5-4628-8e65-f82a0fc71724

809ad6513236 ubuntu:latest "/bin/sh -c 'while tr" About an hour ago Up About an hour mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.c42d9e0c-f66e-42be-ac0e-46bc8f44e47b

de7523c00d41 ubuntu:latest "/bin/sh -c 'while tr" About an hour ago Up About an hour mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.e7fcebfb-f301-4369-83eb-2782832d83a9

d3729dad1bed ubuntu:latest "/bin/sh -c 'while tr" About an hour ago Up About an hour mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.e924e32b-521b-4708-b538-bc2973094718

The processes are:

$ ps -ef | grep Hello | grep -v grep

root 19306 19258 0 14:40 ? 00:00:00 docker -H unix:///var/run/docker.sock run --cpu-shares 102 --memory 33554432 -e MARATHON_APP_VERSION=2016-12-18T14:40:40.292Z -e HOST=openshift-installer-native-docker-compose -e MARATHON_APP_RESOURCE_CPUS=0.1 -e MARATHON_APP_RESOURCE_GPUS=0 -e MARATHON_APP_DOCKER_IMAGE=ubuntu:latest -e PORT_10000=31730 -e MESOS_TASK_ID=while-loop-hello-world-container-small.f27ecd8a-c52f-11e6-ad85-02422b10e522 -e PORT=31730 -e MARATHON_APP_RESOURCE_MEM=32.0 -e PORTS=31730 -e MARATHON_APP_RESOURCE_DISK=0.0 -e MARATHON_APP_LABELS= -e MARATHON_APP_ID=/while-loop-hello-world-container-small -e PORT0=31730 -e MESOS_SANDBOX=/mnt/mesos/sandbox -e MESOS_CONTAINER_NAME=mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.e924e32b-521b-4708-b538-bc2973094718 -v /var/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world-container-small.f27ecd8a-c52f-11e6-ad85-02422b10e522/runs/e924e32b-521b-4708-b538-bc2973094718:/mnt/mesos/sandbox --net host --entrypoint /bin/sh --name mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.e924e32b-521b-4708-b538-bc2973094718 ubuntu:latest -c while true; do echo "I am a Hello World script in a Docker container"; sleep 1; done 2>&1 1> this_is_my_container_output.log

root 19319 19280 0 14:40 ? 00:00:00 docker -H unix:///var/run/docker.sock run --cpu-shares 102 --memory 33554432 -e MARATHON_APP_VERSION=2016-12-18T14:40:40.292Z -e HOST=openshift-installer-native-docker-compose -e MARATHON_APP_RESOURCE_CPUS=0.1 -e MARATHON_APP_RESOURCE_GPUS=0 -e MARATHON_APP_DOCKER_IMAGE=ubuntu:latest -e PORT_10000=31873 -e MESOS_TASK_ID=while-loop-hello-world-container-small.f27e5859-c52f-11e6-ad85-02422b10e522 -e PORT=31873 -e MARATHON_APP_RESOURCE_MEM=32.0 -e PORTS=31873 -e MARATHON_APP_RESOURCE_DISK=0.0 -e MARATHON_APP_LABELS= -e MARATHON_APP_ID=/while-loop-hello-world-container-small -e PORT0=31873 -e MESOS_SANDBOX=/mnt/mesos/sandbox -e MESOS_CONTAINER_NAME=mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.c42d9e0c-f66e-42be-ac0e-46bc8f44e47b -v /var/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world-container-small.f27e5859-c52f-11e6-ad85-02422b10e522/runs/c42d9e0c-f66e-42be-ac0e-46bc8f44e47b:/mnt/mesos/sandbox --net host --entrypoint /bin/sh --name mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.c42d9e0c-f66e-42be-ac0e-46bc8f44e47b ubuntu:latest -c while true; do echo "I am a Hello World script in a Docker container"; sleep 1; done 2>&1 1> this_is_my_container_output.log

root 19320 19281 0 14:40 ? 00:00:00 docker -H unix:///var/run/docker.sock run --cpu-shares 102 --memory 33554432 -e MARATHON_APP_VERSION=2016-12-18T14:40:40.292Z -e HOST=openshift-installer-native-docker-compose -e MARATHON_APP_RESOURCE_CPUS=0.1 -e MARATHON_APP_RESOURCE_GPUS=0 -e MARATHON_APP_DOCKER_IMAGE=ubuntu:latest -e PORT_10000=31245 -e MESOS_TASK_ID=while-loop-hello-world-container-small.f2792838-c52f-11e6-ad85-02422b10e522 -e PORT=31245 -e MARATHON_APP_RESOURCE_MEM=32.0 -e PORTS=31245 -e MARATHON_APP_RESOURCE_DISK=0.0 -e MARATHON_APP_LABELS= -e MARATHON_APP_ID=/while-loop-hello-world-container-small -e PORT0=31245 -e MESOS_SANDBOX=/mnt/mesos/sandbox -e MESOS_CONTAINER_NAME=mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.e7fcebfb-f301-4369-83eb-2782832d83a9 -v /var/tmp/mesos/slaves/a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0/frameworks/44a35e16-dc32-4f91-afac-33dfff498944-0000/executors/while-loop-hello-world-container-small.f2792838-c52f-11e6-ad85-02422b10e522/runs/e7fcebfb-f301-4369-83eb-2782832d83a9:/mnt/mesos/sandbox --net host --entrypoint /bin/sh --name mesos-a06268a0-4cfd-4d76-94c7-fbdf053be0ba-S0.e7fcebfb-f301-4369-83eb-2782832d83a9 ubuntu:latest -c while true; do echo "I am a Hello World script in a Docker container"; sleep 1; done 2>&1 1> this_is_my_container_output.log